This post originally appeared on The Sieve.

In 1987, Wendell Berry complained in a delightfully grumpy essay that “a number of people” were advising him to buy a computer. This new machine would, his friends and colleagues surely believed, free the great writer from the drudgery of composing by hand. It could save his drafts, allow him to cut and paste, and maybe even spell-check. So did Berry join his colleagues and leap into the digital age?

Absolutely not. He could write just fine with pencil and paper, he explained. When he finished a draft, his wife Tanya typed—and edited—his manuscript on a Royal standard typewriter (a practice that did not sit well with some feminists). A computer would disrupt the Berrys’ finely tuned “literary cottage industry”—a highly productive industry that has led to over 50 (and counting) books and collections of poems and essays. It’s easy to see why Berry would be wary.

I think today’s writer has far more to be wary about than Berry did, back when computers were little more than glorified typewriters. Today’s machines are spigots at the end of a pipeline delivering an endless stream of information from the outside world. Each news update and email and tweet has the potential to derail a writer’s train of thought—and yet we let them in. Do we really believe this has no effect on our ability to write?

In fact, scientists are amassing a mountain of evidence that computer-based distractions can devastate mental performance. In a recent New York Times article, journalist Bob Sullivan and scientist Hugh Thompson described a study in which they gave subjects an exam and then told some participants they would be interrupted by an instant message. The subjects who were interrupted performed 20% worse than uninterrupted test-takers. “The results,” the authors wrote, “were truly dismal.”

And it’s not just exam-taking that’s affected—it’s all kinds of mental activity. Last week on the radio program Science Friday, Stanford University psychologist Clifford Nass told host Ira Flatow that constant multitaskers are worse at focusing, thinking creatively, and even multitasking itself. “The research is almost unanimous…that people who chronically multitask show an enormous range of deficits,” Nass said. “They’re pretty much mental wrecks.”

More worrisome, these distractions also seem to have the power to make our brains want more of them. A growing body of literature suggests Internet applications harness the same neurological reward pathways that addictive drugs have long exploited. Scientists have recently identified a phenomenon known as “phantom vibration”—the feeling that a cell phone is vibrating in response to an incoming message, even when it isn’t. As someone who compulsively looks for the little parenthetical “(1)” after the word “Inbox” on my web browser, I have to say this kind of research is confirming what I know all too well from experience.

Yet despite the mounting evidence, science writers—and probably many others—face increasing pressure to embrace every new social media platform. I don’t think I’ve ever been to a writers’ conference that didn’t include, in some form, a panel of writers selling their colleagues on the glories of Facebook, Twitter, Google Plus and the like. If we don’t expose ourselves to the endless volley of information bomblets, we’re told we’ll miss out on the all-important “conversation.” I sometimes wonder if I’m the only one who finds this a rather poor substitute for what we now must call “in-person” conversation.

I see from Berry’s essay that this pushing of addictive Internet technologies by their users is nothing new. Strangely, it reminds me of the peer pressure teenagers supposedly exert on each other to try cigarettes, booze, or other substances—right down to the “everybody’s doing it” message. Given the known risks, I would suggest we approach addictive technologies with some of the same caution we apply to those drugs. Perhaps we need a new DARE for the Internet age.

That said, I am not about to leave behind the Internet and its attendant technologies. Indeed, for a relatively new (hopefully up-and-coming) writer like me, shunning email, blogs, and social media would be professional suicide. And I appreciate that these technologies allow me to share work, like this essay, with colleagues, editors, and readers around the world. (Even Berry has lately admitted borrowing a friend’s computer to send his work to publishers.) I can’t imagine going back to pencil and paper and snail mail, nor do I wish to. I’d do as well trying to get around by horse.

But I do reject what Berry calls “technological fundamentalism”: the uncritical belief that every new technology will improve our lives. I would argue that a device or service should be judged not by whether it’s novel or whether everybody else is using it, but by whether it actually helps us do our jobs better. While social networking sites may aid writers in publishing and promoting themselves, which are certainly necessary parts of the business, they seem to be detrimental to the writer’s most fundamental task, which, I would argue, is thinking. Deep, uninterrupted thinking.

Despite all scientists have learned about the brain, thinking remains mysterious and idiosyncratic. It seems to resist being forced or hurried. But clearly it can be easily sabotaged. Let’s take a stand against the proliferating technologies that fragment our time and our thoughts. Let’s recognize that our most important tool is not the digital computer or the wireless router but the analog brain.

What do you think—have we let Internet technologies intrude too much in our lives? How do you manage the distraction deluge? Leave a comment—or better yet, tell me in person!

Enjoyed this very much, thanks for writing it!

As a fun aside, you might be interested to know that Tanya still serves as Wendell’s transcriber-in-chief. She recently wrote me a letter on his behalf, politely explaining that he no longer endorses books. And yes, the letter was handwritten :^)

Great points. I think it is very hard to concentrate on writing amid the proliferation of these technologies.

I would say that those of us engaged in international work need to stay connected to colleagues around the globe, which is virtually impossible without these technologies.

Agreed. I refuse to get a smartphone. That’s where I draw the line. I go into a quiet little panic every time I hear the phrase “Google Glass.” However, I am very pessimistic about society’s ability or desire to refuse any technology completely.

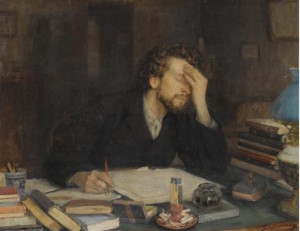

Great essay, Gabe! I love the portrait of you as a young writer. My tactic is to keep reminding myself that writing is about thinking, not typing words. When I print a draft and scribble in the margins on the metro, or step away from the screen to turn a sentence over in my mind, the final piece always comes out better.